MC System

Latest revision as of 14:55, 2 July 2019

The many-core version of the project provides different types of tiles, although all of them share the same network- and coherence-related components. On the networking side, a generic tile of the system features a Network Interface module and a hardware Router, both described in the Network architecture section.

The NaplesPU many-core features a shared-memory system. Each NPU core has a private L1 data cache, while the L2 cache is distributed across all instantiated tiles. Each tile also includes a Directory Controller module, which handles and stores coherence information for cached memory lines. Both are further described in the Coherence architecture section.

Contents

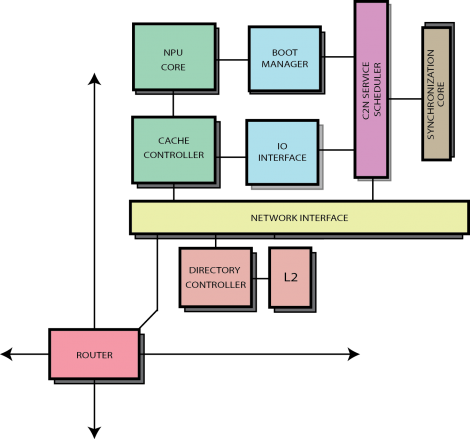

NPU tile

The NPU tile mainly equipped with a NaplesPU GPGPU core, described in NaplesPU GPGPU core section, and components that interface the core with both networking and coherence systems. The following figure depicts a block view of the NPU tile:

The tile implements a Cache controller, called l1d_cache, which interfaces the core with the memory system. The Cache controller uses three Network Interface virtual channels dedicated to the coherence system (IDs 0 to 2), giving the core an abstracted view of coherence. Two of these channels are also shared with the Directory controller. For further details on the module and on the coherence system, refer to the Coherence architecture section.

Host communication, the synchronization system and IO requests rely on the last virtual channel (ID 3), named the Service Virtual Channel. The tile_npu module implements an arbiter that dispatches each incoming message from the service channel to the right module, based on its message_type field. For instance, a message from the host is marked as HOST and is forwarded to the boot manager module in the tile:

```systemverilog
always @( ni_n2c_mes_service_valid, ni_n2c_mes_service, n2c_sync_message, bm_c2n_mes_service_consumed, sc_account_consumed, n2c_mes_service_consumed, io_intf_message_consumed ) begin
    ...
    if ( ni_n2c_mes_service_valid ) begin
        if ( ni_n2c_mes_service.message_type == HOST ) begin
            // HOST: forward the payload to the boot manager
            bm_n2c_mes_service.data  = ni_n2c_mes_service.data;
            bm_n2c_mes_service_valid = ni_n2c_mes_service_valid;
            c2n_mes_service_consumed = bm_c2n_mes_service_consumed;
        end else if ( ni_n2c_mes_service.message_type == SYNC ) begin
            if ( n2c_sync_message.sync_type == ACCOUNT ) begin
                // ACCOUNT: forward to the Synchronization core
                ni_account_mess          = n2c_sync_message.sync_mess.account_mess;
                ni_account_mess_valid    = ni_n2c_mes_service_valid;
                c2n_mes_service_consumed = sc_account_consumed;
            end else if ( n2c_sync_message.sync_type == RELEASE ) begin
                // RELEASE: forward to the core service interface (Barrier core)
                n2c_release_message      = n2c_sync_message.sync_mess.release_mess;
                n2c_release_valid        = ni_n2c_mes_service_valid;
                c2n_mes_service_consumed = n2c_mes_service_consumed;
            end
    ...
```

For a SYNC message, the arbiter above checks whether the message is of ACCOUNT or RELEASE type. An ACCOUNT message is dispatched to the Synchronization core. A RELEASE message is forwarded to the NPU core through its service interface, which is directly connected to the Barrier core module inside the core.

The possible service message types are defined in the npu_message_service_define.sv header file:

```systemverilog
typedef enum logic [`MESSAGE_TYPE_LENGTH - 1 : 0] {
    HOST  = 0,
    SYNC  = 1,
    IO_OP = 2
} service_message_type_t;
```
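The dispatching listing above is abridged. The sketch below makes the rule explicit as a self-contained demultiplexer. A valid service message is forwarded to exactly one destination according to its type, and that destination's consumed signal is routed back to the network interface. Several details are assumptions for illustration only. The 2-bit type width stands in for `MESSAGE_TYPE_LENGTH. The package, module and port names are hypothetical. The IO_OP branch is assumed to target the IO interface. SYNC messages are not split further into ACCOUNT and RELEASE here.

```systemverilog
// Hypothetical package mirroring service_message_type_t (2-bit width assumed)
package service_sketch_pkg;
    typedef enum logic [1:0] { HOST = 0, SYNC = 1, IO_OP = 2 } service_message_type_t;
endpackage

// Sketch of a service-channel demultiplexer; not the NaplesPU RTL
module service_dispatch_sketch (
    input  logic                                      in_valid,
    input  service_sketch_pkg::service_message_type_t in_type,
    output logic                                      in_consumed,   // back to the NI
    output logic host_valid, input logic host_consumed,  // boot manager
    output logic sync_valid, input logic sync_consumed,  // synchronization logic
    output logic io_valid,   input logic io_consumed     // IO interface (assumed)
);
    always_comb begin
        // Defaults: nothing forwarded, nothing consumed
        host_valid  = 1'b0;
        sync_valid  = 1'b0;
        io_valid    = 1'b0;
        in_consumed = 1'b0;
        if (in_valid) begin
            case (in_type)
                service_sketch_pkg::HOST: begin
                    host_valid  = 1'b1;
                    in_consumed = host_consumed;
                end
                service_sketch_pkg::SYNC: begin
                    sync_valid  = 1'b1;
                    in_consumed = sync_consumed;
                end
                service_sketch_pkg::IO_OP: begin
                    io_valid    = 1'b1;
                    in_consumed = io_consumed;
                end
                default: ;  // unknown type: not forwarded
            endcase
        end
    end
endmodule
```

Because the consumed signal comes from whichever sink is selected, a message stays at the head of the service channel until the module it is addressed to accepts it.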

The Synchronization core is described in detail in the Synchronization architecture section. The remainder of this section focuses on the other modules that rely on the Service Virtual Channel.

C2N Service Scheduler

This module stores and serializes requests from the modules that need to send a message over the Service Virtual Channel. Since this channel has a single entry point, the c2n_service_scheduler provides a dedicated port to each requesting module and forwards their requests to the Service Virtual Channel. When requests conflict, an internal round-robin arbiter selects them one at a time.
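The arbiter inside c2n_service_scheduler is not shown here. As a rough illustration of the round-robin policy, here is a minimal sketch. The module and signal names (rr_arbiter_sketch, request, update, grant_oh) are hypothetical. The sketch also assumes that priority advances only when update is high, for example when the granted message has been accepted by the network interface.

```systemverilog
// Minimal round-robin arbiter sketch (hypothetical, not the NaplesPU RTL).
// NUM_REQ requesters share one output; NUM_REQ must be at least 2.
module rr_arbiter_sketch #(
    parameter int NUM_REQ = 4
) (
    input  logic                 clk,
    input  logic                 reset,
    input  logic [NUM_REQ-1:0]   request,   // one bit per requesting module
    input  logic                 update,    // advance priority after a grant is used
    output logic [NUM_REQ-1:0]   grant_oh   // one-hot grant
);
    logic [$clog2(NUM_REQ)-1:0] priority_ptr;  // requester with highest priority
    logic [$clog2(NUM_REQ)-1:0] grant_idx;
    logic                       found;

    // Scan requesters starting from priority_ptr and grant the first active one
    always_comb begin
        grant_oh  = '0;
        grant_idx = '0;
        found     = 1'b0;
        for (int i = 0; i < NUM_REQ; i++) begin
            if (!found && request[(priority_ptr + i) % NUM_REQ]) begin
                found     = 1'b1;
                grant_idx = (priority_ptr + i) % NUM_REQ;
            end
        end
        if (found)
            grant_oh[grant_idx] = 1'b1;
    end

    // The granted requester becomes the lowest priority in the next round
    always_ff @(posedge clk) begin
        if (reset)
            priority_ptr <= '0;
        else if (update && found)
            priority_ptr <= (grant_idx + 1) % NUM_REQ;
    end
endmodule
```

Rotating the pointer past the last winner guarantees that every requester holding its request is served within NUM_REQ grants, so no module on the service channel can be starved.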

Boot Manager

Host commands are dispatched to the boot_manager module, which is connected to the Thread Controller and to the control registers of the core. This module implements the core-side logic of the Item interface, described in the Item Interface section. It drives the core according to the messages received from the host.

IO Interface

The io_interface module receives memory requests that target the non-coherent memory region and dispatches them to the right IO device within the system. Its main job is to convert a memory request into an IO message that travels over the network. For each memory request from the core, the io_interface module packs essential information, such as the requested address, into an IO message as follows:

```systemverilog
assign io_intf_available_to_core = ~req_almost_full,
       req_value_i.io_source     = tile_id_t'(TILE_ID),
       req_value_i.io_thread     = ldst_io_thread,
       req_value_i.io_operation  = io_operation_t'(ldst_io_operation),
       req_value_i.io_address    = ldst_io_address,
       req_value_i.io_data       = ldst_io_data;
```

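When the component issues a new request (issue_req), the IO message is always forwarded to the H2C tile. Otherwise, it is addressed to the tile stored in the io_source field of the saved message:

```systemverilog
assign dest_tile_idx = issue_req ? `TILE_H2C_ID : saved_mess.io_source;
```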

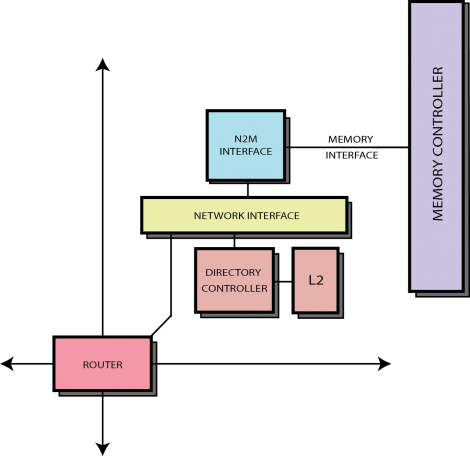

MC tile

The MC tile interfaces the system with main memory, which is defined as follow:

```systemverilog
// To Memory NI
output logic [MANGO_ADDRESS_WIDTH - 1 : 0] n2m_request_address,
output logic [63 : 0]                      n2m_request_dirty_mask,
output logic [MANGO_DATA_WIDTH - 1 : 0]    n2m_request_data,
output logic                               n2m_request_read,
output logic                               n2m_request_write,
output logic                               n2m_request_is_instr,
output logic                               n2m_avail,

// From Memory NI
input  logic                               m2n_request_read_available,
input  logic                               m2n_request_write_available,
input  logic                               m2n_response_valid,
input  logic [MANGO_ADDRESS_WIDTH - 1 : 0] m2n_response_address,
input  logic [MANGO_DATA_WIDTH - 1 : 0]    m2n_response_data
```

The memory interface has a simple valid/available handshake and allows read/write operations.
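To make the handshake concrete, here is a simulation-only memory model that sits on the memory side of the interface above. It is a sketch, and its semantics are assumptions the source does not state:

- m2n_request_read_available and m2n_request_write_available mean a read or write may be issued in the current cycle.
- n2m_avail means the tile can accept a response.
- n2m_request_dirty_mask carries one write-enable bit per byte of a 512-bit line.
- At most one read is outstanding at a time.

The module name, the widths, and the LINES parameter are hypothetical.

```systemverilog
// Simulation-only memory model for the MC-tile interface (hypothetical).
module toy_memory_sketch #(
    parameter int ADDR_WIDTH = 32,   // assumption
    parameter int DATA_WIDTH = 512,  // assumption: 16 x 32-bit words, as in the swap loop
    parameter int LINES      = 16    // hypothetical number of modeled lines
) (
    input  logic                          clk,
    input  logic                          reset,
    // From the MC tile
    input  logic [ADDR_WIDTH - 1 : 0]     n2m_request_address,
    input  logic [DATA_WIDTH / 8 - 1 : 0] n2m_request_dirty_mask,  // one bit per byte (assumed)
    input  logic [DATA_WIDTH - 1 : 0]     n2m_request_data,
    input  logic                          n2m_request_read,
    input  logic                          n2m_request_write,
    input  logic                          n2m_request_is_instr,    // ignored by this model
    input  logic                          n2m_avail,
    // To the MC tile
    output logic                          m2n_request_read_available,
    output logic                          m2n_request_write_available,
    output logic                          m2n_response_valid,
    output logic [ADDR_WIDTH - 1 : 0]     m2n_response_address,
    output logic [DATA_WIDTH - 1 : 0]     m2n_response_data
);
    localparam int OFFSET_BITS = $clog2(DATA_WIDTH / 8);  // byte offset inside a line
    localparam int INDEX_BITS  = $clog2(LINES);

    logic [DATA_WIDTH - 1 : 0] mem [LINES];
    logic [INDEX_BITS - 1 : 0] index;

    assign index = n2m_request_address[OFFSET_BITS +: INDEX_BITS];

    // At most one outstanding read; writes are always accepted
    assign m2n_request_read_available  = ~m2n_response_valid;
    assign m2n_request_write_available = 1'b1;

    always_ff @(posedge clk) begin
        if (reset) begin
            m2n_response_valid <= 1'b0;
        end else begin
            // A response stays valid until the tile signals it can take it
            if (m2n_response_valid && n2m_avail)
                m2n_response_valid <= 1'b0;

            // Byte-masked write of the addressed line
            if (n2m_request_write && m2n_request_write_available) begin
                for (int b = 0; b < DATA_WIDTH / 8; b++)
                    if (n2m_request_dirty_mask[b])
                        mem[index][b * 8 +: 8] <= n2m_request_data[b * 8 +: 8];
            end

            // Read: the registered response appears after the sampling clock edge
            if (n2m_request_read && m2n_request_read_available) begin
                m2n_response_valid   <= 1'b1;
                m2n_response_address <= n2m_request_address;
                m2n_response_data    <= mem[index];
            end
        end
    end
endmodule
```

A stub like this can back the MC tile in a standalone testbench. Availability is checked before a request is raised, matching the way request_is_write in npu2memory is gated by m2n_request_write_available.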

The npu2memory module turns coherence messages from the NPU core into valid memory requests for the interface above:

```systemverilog
assign request_is_valid = |request_oh & enable & ~pending_read;

assign request_is_read  = request_is_valid &
                          ( pending_fwd_request_out.packet_type == FWD_GETS |
                            pending_fwd_request_out.packet_type == FWD_GETM ) &
                          grant_oh[1];

assign request_is_write = m2n_request_write_available & ni_response_network_available &
                          ~pending_resp_fifo & request_is_valid &
                          pending_response_out.packet_type == WB & grant_oh[0];
```

This module also provides optional endianness swapping. It reverses the byte order of each 32-bit word in both the memory response data and the outgoing data:

```systemverilog
if (SWAPEND) begin
    for ( swap_word = 0; swap_word < 16; swap_word++ ) begin : swap_word_gen
        // Reverse the byte order of each 32-bit word of the memory response
        assign m2n_response_data_swap[swap_word * 32 +: 8]      = m2n_response_data[swap_word * 32 + 24 +: 8];
        assign m2n_response_data_swap[swap_word * 32 + 8 +: 8]  = m2n_response_data[swap_word * 32 + 16 +: 8];
        assign m2n_response_data_swap[swap_word * 32 + 16 +: 8] = m2n_response_data[swap_word * 32 + 8 +: 8];
        assign m2n_response_data_swap[swap_word * 32 + 24 +: 8] = m2n_response_data[swap_word * 32 +: 8];

        // Same reversal for each 32-bit word of the outgoing data
        assign out_data_swap[swap_word * 32 +: 8]      = pending_response_out.data[swap_word * 32 + 24 +: 8];
        assign out_data_swap[swap_word * 32 + 8 +: 8]  = pending_response_out.data[swap_word * 32 + 16 +: 8];
        assign out_data_swap[swap_word * 32 + 16 +: 8] = pending_response_out.data[swap_word * 32 + 8 +: 8];
        assign out_data_swap[swap_word * 32 + 24 +: 8] = pending_response_out.data[swap_word * 32 +: 8];
    end
end
```

Parameter SWAPEND determines whether the swapping logic is allocated or not.
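The same per-word byte reversal can be written more compactly with a concatenation, which places its first operand in the most significant position. The sketch below uses the hypothetical module endian_swap_sketch and applies the swap to one 512-bit bus. The two swaps above would correspond to two instances of it under if (SWAPEND). The streaming operator {<<8{word}} is an equivalent SystemVerilog form.

```systemverilog
// Per-word byte swap sketch (hypothetical module and port names)
module endian_swap_sketch #(
    parameter int WORDS = 16  // 16 x 32-bit words = one 512-bit line
) (
    input  logic [WORDS * 32 - 1 : 0] data_in,
    output logic [WORDS * 32 - 1 : 0] data_out
);
    genvar w;
    for (w = 0; w < WORDS; w = w + 1) begin : swap_word_gen
        // Byte 0 of the input word becomes byte 3 of the output word, and so on
        assign data_out[w * 32 +: 32] = { data_in[w * 32      +: 8],
                                          data_in[w * 32 + 8  +: 8],
                                          data_in[w * 32 + 16 +: 8],
                                          data_in[w * 32 + 24 +: 8] };
    end
endmodule
```

Only bytes inside each 32-bit word move; the order of the 16 words in the line is unchanged, exactly as in the generate loop above.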

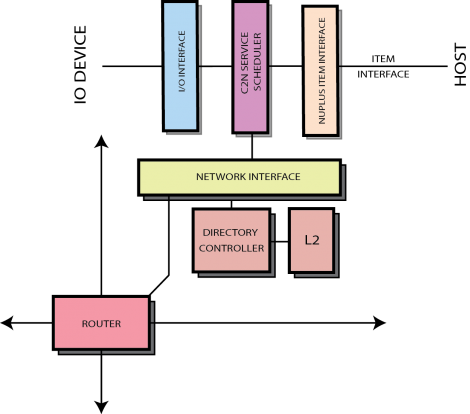

H2C tile

The H2C tile interfaces the system with the host through the Item interface. The npu_item_iternface interprets incoming item from the host and builds service packets marked as HOST type which encapsulates the command sent by the host. One the packet is ready, the npu_item_interface forwards it to the destination tile through the service network. Section Item Interface details service packets sent by this module.

NONE tile

Empty tile: a NONE tile allocates only the shared network- and coherence-related components.

HT tile

Described in the Heterogeneous Tile section.